It Pleases

It Pleases started in 2023 as a series of acrylic paintings on flat canvas board. They are documented on itpleases.com and available for purchase on Etsy.

To help shoppers make informed purchase decisions quickly, this gadget uses a barcode scanner and visual feedback in the trolley handle. My role was to assist the researchers at UCL with implementing an early prototype using Arduino and Processing. The version I worked on featured 16 LEDs embedded in the handlebar; the video shows a later version with an embedded screen. Check out the video and the article by Fast Company.

Named after the fabulous Elm programming language, this game challenges two players to a hilarious fight to the death. The goal was to create a fun game that needs no color, sound, or complex shapes. Using the most minimalistic avatars (circles) and environment (a rectangle) imaginable, the game exposes what happens between two humans when everything is stripped down to the basics. After a minute, are you still looking at a circle or straight into your opponent's soul?

P.S.: I was thrilled to learn that the game has been used to teach programming to kids in Oslo!

In collaboration with Intel and University College London (UCL), I created batslondon.com to make realtime wildlife data available to the general public. The website visualised, in realtime, the location and frequency of bat calls as they were detected by AI-powered ultrasound sensors in the park. While the sensors and the website are no longer active, you can read all about the project in the BBC article and on Nature-Smart Cities.

This game lets children collaborate on making silly sentences together, using four tablets and a projector. Each tablet lets them replace a different part of the sentence, and the projector shows the result in realtime. Live Sentence was developed during my time as a visiting researcher at the ChaTLab, University of Sussex, and was open to the general public at the Brighton Science Festival.

For a series of Sustainability Leadership training workshops at the University of Cambridge, I designed a multi-tablet game that allowed participants to collaborate on simulated climate change scenarios. A robust and responsive network linking the tablets and projectors was critical to the success of the system. It was realized using custom-built server software written in Processing, HTML/JavaScript interfaces on the tablets, and a dedicated Wi-Fi router in the room. The game was a huge success with participants and later became the core of my PhD thesis on designing collaborative learning activities.

A study in information density made with Csound and the fabulous MARY TTS speech generation library.

This is a stereo version of the original 4-channel piece.

Enjoy the whole trilogy on SoundCloud.

This interactive tabletop application allows groups of up to 4 tourists to plan an enjoyable day out in Cambridge. It was designed and evaluated by researchers at The Open University and published as a full research paper at the CHI conference in 2011. I was responsible for the implementation (in Processing).

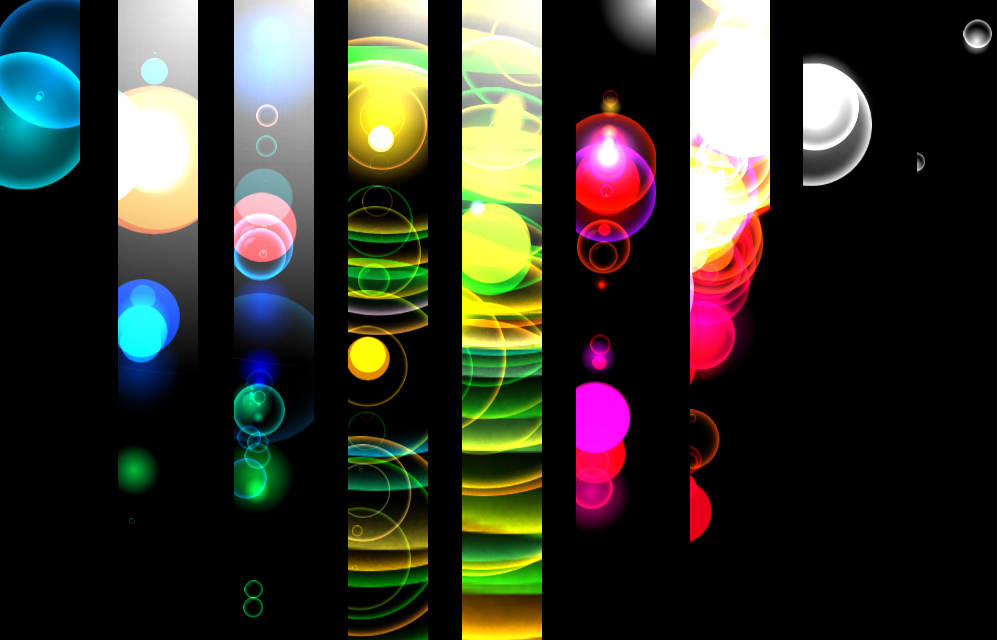

When jazz legends Carla Bley and Steve Swallow played at Philharmonie Essen, Germany, in 2009, I had the honor of designing live visuals for the event. A custom 8-meter projection screen was commissioned, and I brought artists Henrik Lippke and Thamya Rocha into the team. Together we developed a tool (Processing, Pure Data, and multiple MIDI controllers) to generate free-floating bubbles that could move individually or in dynamic formations, adapt to the music, change their shapes and colors, and leave trails. Following Thamya's artistic direction, Henrik and I performed the visuals along with the music, focusing on very slow-moving systems of color and light. The video shows the entire concert in fast forward.

Real-time animated fractals surround the audience on a winter evening. Shaped as human figures, roads, or trees, they react to the sound of the audience and occasionally morph into each other. Why shouldn't legs be seen as branches or forks in the road? What makes a forest different from a crowd? Is it just a matter of scale and angle? This work playfully reflects on the concept of self-similarity by extending it from the individual (fractal) object to the boundaries between types of objects as well as story elements. The installation took place at an art college surrounded by trees and roads. Implemented in Processing.

This short video shows a few examples of my visual work in the early 2000s, including commissions and live performances. Everything was created using open-source tools, including openFrameworks, Processing, Blender, and ImageMagick.

"Diktator ohne Land" was a studio band active in the early 2000s. Its only album, "Zimt-Artillerie," was recorded almost entirely in my student bedroom studio and still brings me joy when I listen to it. The name "Diktator ohne Land" was inspired by the Dalai Lama and "Zimt-Artillerie" was inspired by a harmless baking accident.